Study finds problems with NOAA’s scientific integrity in reporting ‘billion dollar disasters’

A new study finds that the data NOAA publishes lacks the transparency needed to verify its sources. The cost figures are not adjusted for changes in wealth over time, which produces misleading results, and the agency is incorrectly attributing the trends to changes in climate.

The National Oceanic and Atmospheric Administration (NOAA) on Tuesday released its final 2023 tally of disasters exceeding $1 billion in damages. According to NOAA, there were 28 such disasters in 2023, the most in any year since 1980, when the agency began keeping track of the figure.

The numbers receive a lot of media attention every year, and reporters present the numbers as evidence that extreme weather events are becoming more destructive as a result of climate change.

Scientific integrity

However, a new study finds that NOAA’s methodology lacks scientific integrity and goes against the agency’s own standards. The study also says NOAA attributes the trend in billion-dollar disasters to trends in climate, which is not a proper use of disaster loss figures.

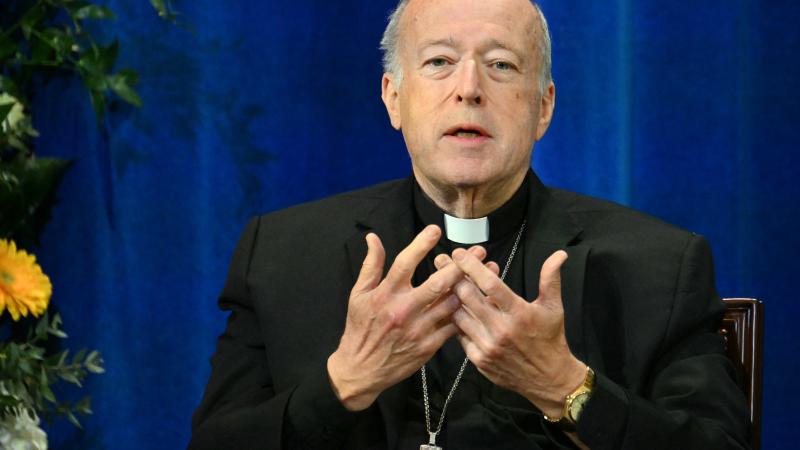

The study’s author, Dr. Roger Pielke Jr., professor of environmental studies at the University of Colorado at Boulder, has spent nearly three decades researching trends in disaster costs over time. That research shows the trends are actually declining.

Pielke’s research normalizes the disaster costs, which means he adjusts for differences in wealth over time. Pielke explains why this is important in an article on his “The Honest Broker” Substack. If a category 3 hurricane hit Miami Beach in 1926, it would impact far less development than a storm of equal intensity hitting the beach today. Without controlling for these differences, Pielke writes, it’s impossible to reliably determine trends.
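
The idea behind normalization can be shown with a minimal sketch. It is not Pielke’s actual method, which the article does not detail. It assumes, purely for illustration, that a historical loss is scaled by the ratio of exposed wealth today to exposed wealth at the time of the event. The function name `normalize_loss` and all figures are hypothetical.

```python
def normalize_loss(loss, wealth_then, wealth_now):
    """Scale a historical loss by the growth in exposed wealth.

    Illustrative assumption only: normalized = loss * (wealth_now / wealth_then).
    """
    if wealth_then <= 0:
        raise ValueError("wealth_then must be positive")
    return loss * (wealth_now / wealth_then)


# Hypothetical figures, in millions of dollars, chosen only for illustration.
historical_loss = 100.0   # damage recorded in the year of the storm
wealth_then = 2_000.0     # value of property exposed at that time
wealth_now = 50_000.0     # value of property exposed in the same area today

normalized = normalize_loss(historical_loss, wealth_then, wealth_now)
print(f"Normalized loss: {normalized:.1f} million")
```

In this made-up case, exposed wealth has grown 25-fold, so the same storm would be counted as 25 times more costly today even though its physical intensity is unchanged.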

In a preprint released last week, Pielke evaluates the methods NOAA uses to calculate billion-dollar disasters. He finds that the data NOAA publishes lacks the transparency needed to verify its sources, which the study notes runs counter to the agency’s own guidelines for scientific integrity. Likewise, the cost figures are not normalized, which produces misleading results. The agency is also, according to the study, incorrectly attributing the trends to changes in climate over time.

“Reporters on the climate beat will uncritically parrot and amplify NOAA’s claims, and before long, the dataset will find itself cited in the peer-reviewed literature,” Pielke wrote in an announcement on the paper.

An agency spokesperson told Just The News that the "billion-dollar disaster" product is based on two decades of research and close collaboration with public- and private-sector partners.

"The methodologies of the Billion Dollar Weather and Climate Disasters product are laid out in Smith and Katz, 2013, a peer-reviewed publication, and follow NOAA’s Information Quality and Scientific Integrity Policies," the spokesperson said.

Uncertainty and risk

Pielke explains in the paper, which has not yet been peer reviewed, that the intent is not to cast doubt on the need to address climate change.

“The point here is not to call into question the reality or importance of human-caused climate change – it is real, and it is important,” Pielke writes.

Pielke told Just The News that the study of human influence on the climate system is about future risks, not certainties.

“Those risks mean that there could be some really nasty surprises in store for people and the planet, or perhaps climate futures are more benign,” he said. “There is no way to tell, because predicting the long-term future is not just difficult, but we have no way to know today if we have any skill in forecasting the future. Climate change is about limiting risks.”

As he explains in the study, the figures NOAA puts out are also used by federal agencies, Congress and the president to justify energy policies. If the information doesn’t uphold standards of scientific integrity, the study warns, it will lead to bad decisions that fail to limit risks.

Among the problems with the billion-dollar tally, Pielke explains, is that the dataset lacks any transparency about its sources, input data or methodologies. Therefore, no independent researcher can replicate the agency’s calculations to verify their accuracy.

NOAA’s Scientific Integrity Policy states that it will “ensure that data and research used to support policy decisions undergo independent peer review by qualified experts.”

The Office of Management and Budget also requires federal agencies to publicly disclose their peer review plans, which includes making the information available online.

“There is no such plan in place for the NOAA ‘billion dollar’ dataset and the methods, which have evolved over time, and results have not been subject to any public or transparent form of peer review,” Pielke writes.

The study disputes the NOAA spokesperson's claim that the methodologies are laid out in Smith and Katz 2013.

"NOAA states that it utilizes more than 'a dozen sources' to 'help capture the total, direct costs (both insured and uninsured) of the weather and climate events,'" the study says.

However, the study explains, NOAA does not specifically identify these sources in relation to specific events, how its estimates are derived from these sources, or the estimates themselves.

The study also finds that historical events are periodically added and removed from the dataset, but NOAA provides no documentation or justification for these changes.

“I am only aware of them through the happenstance of downloading the currently available dataset at different times,” Pielke writes.

Misleading at worst

Pielke’s study also notes that the loss data NOAA is disseminating is not suitable for detection and attribution of trends in extreme weather events because losses involve more than just climate factors.

“Any claim that the NOAA billion-dollar disaster dataset indicates worsening weather or worsening disasters is incomplete at best and misleading at worst,” Pielke writes.

The paper is not the first time Pielke has raised concerns about NOAA’s reporting on disaster costs. Pielke said in an interview that he has opened a dialogue with NOAA, and he was previously a fellow of a NOAA Joint Institute, the CIRES at the University of Colorado-Boulder, from 2001 to 2016.

“People in the agency have long been aware of not just my critiques, but the scientific problems underlying the ‘billion dollar disaster’ dataset. So far, this knowledge has not been sufficient to address the significant scientific integrity problems,” Pielke said.

He said it’s disappointing that such an important agency would be engaging in what he called “trafficking in clickbait grounded in bad science.”

He emphasized that the issues he’s raising are both procedural and substantive, and that together they are producing bad science. “It is astonishing, really,” he said.

Pielke’s study lists a number of recommendations that would improve NOAA’s information quality, including publishing all data, clearly describing all methodologies employed, documenting all changes made to the dataset, and subjecting the results and methods to peer review.

“The billion dollar disaster dataset is an egregious failure of scientific integrity. However, science and policy are both self-correcting,” Pielke wrote in the study.

The study is a preprint, which is a preliminary version of a scientific manuscript that researchers post online before peer review and publication in an academic journal.

Pielke was invited by the editors of Natural Hazards, a new journal, to submit the paper. Pielke said it’s likely the editors have contacted prominent people in the field to submit papers, as they want the journal to succeed, and all the papers have to go through the normal peer-review process.

“Academic publishing is highly competitive. Journals want to carve out space for themselves and publish papers that are read and discussed,” Pielke explained.
